Tech Regulation 2026: The Social Media Mental Health Trial Has the Potential to Transform Big Tech

Tech Regulation could change how every major social app is designed. If you’ve ever tried to get a teenager to put the phone down, you know the battle is real. Tech oversight has moved from debate to the courtroom, with real consequences in 2026.

In January 2026, a federal trial commenced in Los Angeles. Plaintiff K.G.M., 20, and her mother are suing Meta (Instagram) and Google (YouTube). They allege that features such as infinite scroll, notifications, and recommendations caused addiction and severe mental health damage.

TikTok settled separately. Snap also reached an undisclosed agreement. Meanwhile, “bellwether” trials continue through June 2026 to see how juries respond, and to push broader settlements. What you probably want to know is simple: what could this force Big Tech to do, and how do you prepare for the changes? Let’s get into specifics.

What Is Actually Being Argued in the Tech Regulation Case

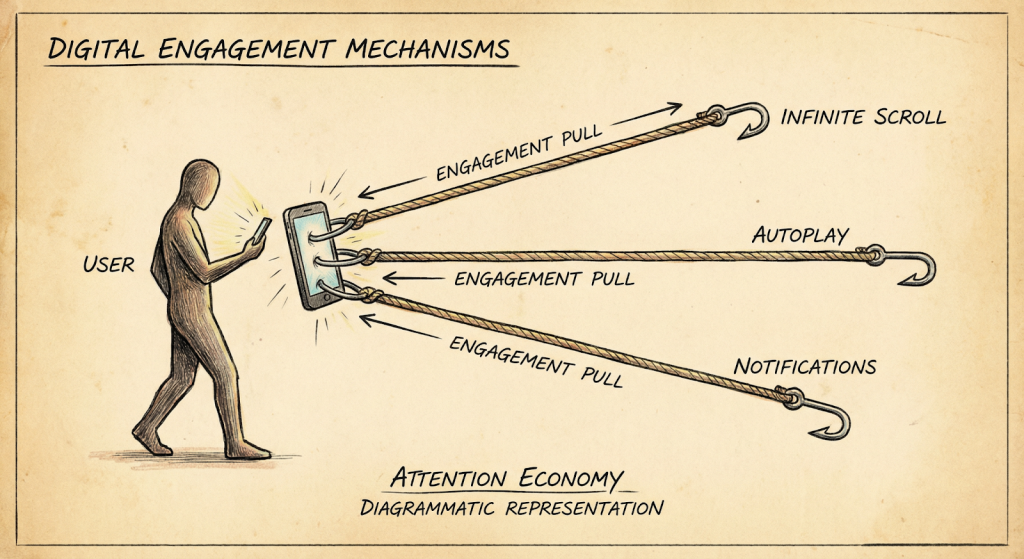

Alt text: Illustration of social media features pulling users in. This lawsuit focuses not on single posts, but on the overall platform design. Plaintiffs are pointing at a bundle of mechanics that keep people scrolling:

- Endless scroll and autoplay that remove natural stopping points

- Notifications that pull users back at the worst times

- Algorithms that amplify the content most likely to keep users hooked

- Weak friction for sensitive topics, especially for minors

One line that’s been repeated in coverage of the filings sums up their claim: the experience was “addictive by design” for young users. That phrase matters because it turns a mental health story into a product liability story.

The K.G.M. Story and Tech Regulation

K.G.M. reports encountering sextortion through Instagram, being cyberbullied, and subsequently becoming trapped in loops of depression-related and body-image content. The complaint also alleges that it took weeks before any meaningful intervention.

In court, timing is everything. A platform can say, “We have rules.” But a jury is going to ask, “Did you act when it counted?”

This is also why bellwether cases exist. A strong jury response could pave the way for similar lawsuits.

The Data Behind the Tech Regulation Lawsuit

There’s no single number that “proves” social media causes depression. Nevertheless, the trend lines are troubling, and policymakers are citing them.

Here are the figures that keep coming up in 2026 discussions:

- Heavy teen users rating their mental health as poor: 41%

- Light users rating their mental health as poor: 23%

- Depressed or suicidal youth with problematic use patterns: 40%

- Cyberbullying among children and adolescents: 34%

- Correlation of SNS use with reported depression, anxiety, and stress: r = 0.273 / r = 0.348 / r = 0.313

- Increase in the depression rate from 2018 to 2023: 48%
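For a sense of scale on those correlation figures, squaring r gives the share of variance two measures have in common. Here is a quick sketch, assuming the three values are Pearson correlations listed in the same order as depression, anxiety, and stress (the figures as quoted don't confirm either point):

```python
# Correlations quoted in the list above. Assumption: these are Pearson r
# values, given in the order depression / anxiety / stress.
correlations = {"depression": 0.273, "anxiety": 0.348, "stress": 0.313}


def shared_variance(r: float) -> float:
    """Return r squared: the fraction of variance two measures share."""
    return r * r


for outcome, r in correlations.items():
    print(f"{outcome}: r = {r:.3f}, r^2 = {shared_variance(r):.1%}")
```

Under those assumptions, SNS use lines up with roughly 7% to 12% of the variation in each outcome. That is meaningful, but it also leaves plenty of room for other factors, which is exactly where the causation fight happens.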

For named research, two references are worth knowing:

First, a University of Manchester study in 2026 challenged simple “screen time equals harm” claims. However, it also noted no protective effects from heavy use. So the takeaway wasn’t “relax.” It was “focus on what’s happening inside the feed.”

Second, the EU’s Digital Services Act requires Very Large Online Platforms to assess systemic risks, including mental well-being and addiction-style design. That’s not a blog opinion. That’s a legal requirement.

Why This Court Case Is Reshaping Platform Rules

Court cases do two things that normal “hearings” don’t.

They force discovery, and they force accountability.

Internal documents have reportedly shown that companies understood certain risks to minors, including predatory connections and harmful recommendations, yet engagement targets stayed dominant. If those documents land cleanly with a jury, it changes the industry’s safest talking point: “We didn’t know.”

At the same time, states are also drafting rules that seem to resemble courtroom remedies.

One example is Minnesota, which will require mental health warning pop-ups starting July 2026, along with warnings tied to related search terms and pointers to crisis hotlines such as 988. California is heading in the same direction, with 42 state AGs on its side. Former U.S. Surgeon General Vivek Murthy has also called for warning labels.

That’s the pincer movement: lawsuits on one side, legislation on the other.

What Meta and Google Say In Response

If you want to sound credible when you talk about this, you have to include the other side.

Meta and Google typically make several key arguments:

- Correlation isn’t causation. Many factors affect teen mental health.

- They provide tools, like time limits, restricted modes, and parental controls.

- Content recommendation is a protected speech activity in some interpretations.

- They take harmful content seriously, and they invest heavily in safety teams.

And to be fair, the causality question is real. Even the University of Manchester’s work is often cited as a reason to avoid simplistic blame.

Plaintiffs counter with this reasoning: the trial isn’t only about “screen time.” It’s about whether specific features predictably amplify harm for vulnerable users, and whether the companies responded fast enough when things went bad.

The Most Likely Outcomes of Tech Regulation

If you’re expecting “social media gets banned,” no. That’s not how this usually plays out.

The realistic outcomes are more boring. And more powerful.

Here’s what could actually change within 12 to 24 months if juries and settlements go a certain way:

- Design limits for minors: more breaks, fewer infinite surfaces, and clearer stopping points.

- Notification guardrails: fewer late-night pings for teen accounts, and less streak pressure.

- Recommendation constraints: stronger “do not keep feeding this” logic after signals of distress.

- Faster response standards: strict timelines for sextortion and self-harm reports.

- Independent audits and proof: if you claim you reduced harm, you may have to show your work.

This is where tech oversight becomes tangible. It shows up as product requirements, staffing plans, and quarterly reports.
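To make “product requirement” concrete, here is a minimal sketch of what a notification guardrail for teen accounts might look like. The quiet-hours window, the function name, and the safety-alert exception are all hypothetical choices for illustration, not anything a court or statute has specified:

```python
from datetime import time

# Hypothetical quiet-hours window for teen accounts: 10 p.m. to 7 a.m. local time.
QUIET_START = time(22, 0)
QUIET_END = time(7, 0)


def should_hold_notification(
    local_time: time, is_minor: bool, is_safety_alert: bool = False
) -> bool:
    """Return True if a push notification should be held until morning."""
    # Adults are unaffected, and safety alerts (an assumed category) always go through.
    if not is_minor or is_safety_alert:
        return False
    # The window wraps past midnight, so check both sides of it.
    return local_time >= QUIET_START or local_time < QUIET_END


print(should_hold_notification(time(23, 30), is_minor=True))   # late-night ping
print(should_hold_notification(time(12, 0), is_minor=True))    # midday ping
```

The point of the sketch is the default: the teen account gets the quiet window without anyone having to turn it on.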

A Simple 7-day Readiness Plan

You don’t need a legal department to act smarter this week. Use this plan.

Day 1: Map the “hooks.”

List autoplay, infinite feeds, streaks, and push notifications.

Day 2: Set safer defaults for minors

If you run a product, make the safe choice the easy choice. If you’re a parent, move devices out of bedrooms at night.

Day 3: Add friction to sensitive content

Extra clicks are annoying. That’s the point.

Day 4: Fix escalation timing

Write a target like “respond within 24 hours” for sextortion reports. Track it.
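If you want to track that target rather than just write it down, something like the following could work. It is a rough sketch: the function names and the shape of the report data are made up for illustration, and a real system would pull timestamps from its own ticketing tool.

```python
from datetime import datetime, timedelta
from typing import Optional

RESPONSE_TARGET = timedelta(hours=24)  # the "respond within 24 hours" target


def met_target(
    reported_at: datetime, responded_at: Optional[datetime], now: datetime
) -> bool:
    """True if the report was answered in time, or is still open and not yet overdue."""
    end = responded_at if responded_at is not None else now
    return end - reported_at <= RESPONSE_TARGET


def compliance_rate(
    reports: list[tuple[datetime, Optional[datetime]]], now: datetime
) -> float:
    """Share of reports that met the target; 1.0 when there are no reports."""
    if not reports:
        return 1.0
    hits = sum(met_target(reported, responded, now) for reported, responded in reports)
    return hits / len(reports)


# Hypothetical data: one fast response, one slow response, one still unanswered.
t0 = datetime(2026, 1, 5, 9, 0)
sample = [(t0, t0 + timedelta(hours=3)), (t0, t0 + timedelta(hours=30)), (t0, None)]
print(f"{compliance_rate(sample, now=t0 + timedelta(hours=48)):.0%} within target")
```

Counting open, overdue reports as misses is a deliberate choice here. Otherwise the number looks best when nobody responds at all.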

Day 5: Log evidence

Screenshots, timestamps, and action taken. Quietly useful.

Day 6: Train moderators

Make sure they know what to do and when to involve law enforcement.

Day 7: Test the recommendations

Observe what the feed serves after a user engages with sad, body-focused, or self-harm-adjacent content. If it spirals, you have work to do.
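For product teams, one way to put a number on Day 7 is to compare how much of the feed is sensitive before and after a test interaction. This is only a sketch: the topic labels, the 0.2 threshold, and the function names are hypothetical, and a real audit would use whatever labels your own classifiers produce.

```python
# Hypothetical topic labels for illustration only.
SENSITIVE_TOPICS = {"self_harm", "body_image", "depression"}


def sensitive_share(feed_topics: list[str]) -> float:
    """Fraction of feed items whose topic label is in SENSITIVE_TOPICS."""
    if not feed_topics:
        return 0.0
    flagged = sum(topic in SENSITIVE_TOPICS for topic in feed_topics)
    return flagged / len(feed_topics)


def feed_spiraled(before: list[str], after: list[str], max_increase: float = 0.2) -> bool:
    """True if the sensitive share rose by more than max_increase after the test interaction."""
    return sensitive_share(after) - sensitive_share(before) > max_increase


# Made-up example: the feed before and after a test account lingers on one sad post.
before = ["sports", "music", "body_image", "cooking"]
after = ["body_image", "depression", "self_harm", "music"]
print(feed_spiraled(before, after))
```

The threshold itself matters less than running the same test on a schedule and keeping the results.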

If you work in games or creator teams, add one more step: build an audience you own, like email or Discord. I’ve watched algorithm changes nuke launches overnight.

Where AI Fits

Recommendation engines aren’t magic. They’re systems that optimize for engagement. That’s why youth safety keeps showing up in regulation headlines.

When you read the latest updates on AI compliance and social media trials, look for talk about auditing, risk testing, and limits for minors. Those ideas map directly onto what courts are weighing right now.

It’s also helpful to look back at the AI regulation news from 2025. A lot of that coverage focused on chatbots and generative media. In 2026, attention is shifting toward ranking and recommendation impact, because that’s where harm claims are easiest to demonstrate.

In the U.S., AI regulation often starts at the state level, then spreads. Minnesota’s pop-up warning rule is a good example of that “prototype” effect.

From inside a company, the practical angle is AI compliance. Keeping clear records and tracking response times may seem tedious, but it’s quickly becoming standard practice. Watch AI regulation updates for anything that turns “best practice” into “required practice.”

And yes, AI policy news is increasingly about kids, not just privacy. That’s the direction of travel.

Track It Sanely

For solid context, I start with the Pew Research Center on teen habits, then I read the actual court reporting. In my notes, I save fresh court links plus U.S. AI regulation news, and I flag the updates with practical consequences. When I need a baseline, I revisit the 2025 coverage. Then I skim current AI policy news to see what might turn into real rules for teams next.

Final Takeaways

This trial won’t end social media. But it can change the default settings that made it so hard to look away.

If you only do three things, do these:

- Build safer defaults and faster escalation, especially for minors

- Track and document what happens when harm is reported

- Follow policy shifts without obsessing, just enough to adapt

Tech Regulation will keep tightening in 2026, whether the verdict lands this summer or the cases settle first.

If you found this useful, share it with someone who ships product features or runs a community, so they prep early. And if you’ve noticed changes tied to AI compliance news, drop what you’re seeing in the comments. It helps everyone stay ahead.

FAQs

Q1: What is the 2026 social media mental health trial?

It’s a federal case in Los Angeles. A young adult and her mum are suing Instagram and YouTube. They say the way these apps are designed hurts teen mental health. Basically, it’s about whether endless scrolling and pushy algorithms caused real harm.

Q2: What could happen if Big Tech loses?

If the plaintiffs win, things could change fast. Expect warnings popping up for teens, less endless scrolling, and smarter content suggestions. Platforms could also face penalties or big settlements. It won’t kill social media, but “engagement at any cost” might finally get a reality check.

Q3: How might this affect creators and businesses?

You might notice slower growth tricks. Notifications won’t be as pushy. Age checks may be stricter. Ads may not reach teens as easily. The fix? Build email lists and communities you control. That way, you’re not hostage to algorithms.

Q4: What can parents do right now?

Turn off late-night notifications. Set app time limits. Keep phones out of bedrooms. And start calm conversations early about bullying and sextortion. No lectures. Just practical check-ins.

Q5: Why is this connected to AI rules?

The feeds that cause the most harm are run by recommendation algorithms. That’s why regulators now want audits, risk checks, and proof that the systems actually reduce harm. It’s less about privacy and more about what the algorithm decides your kid sees next.